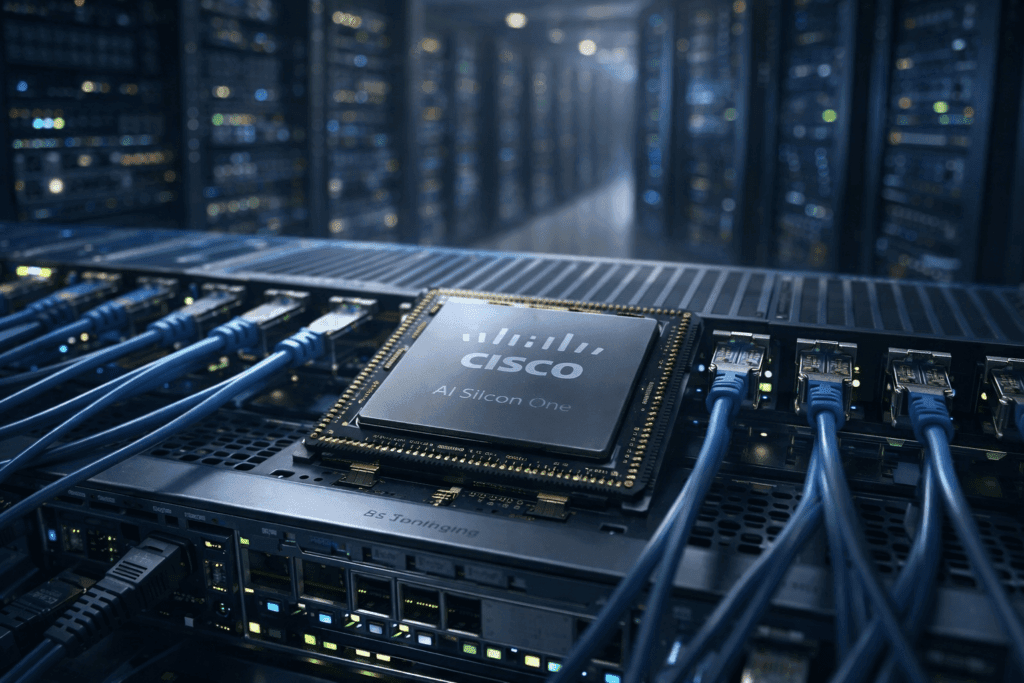

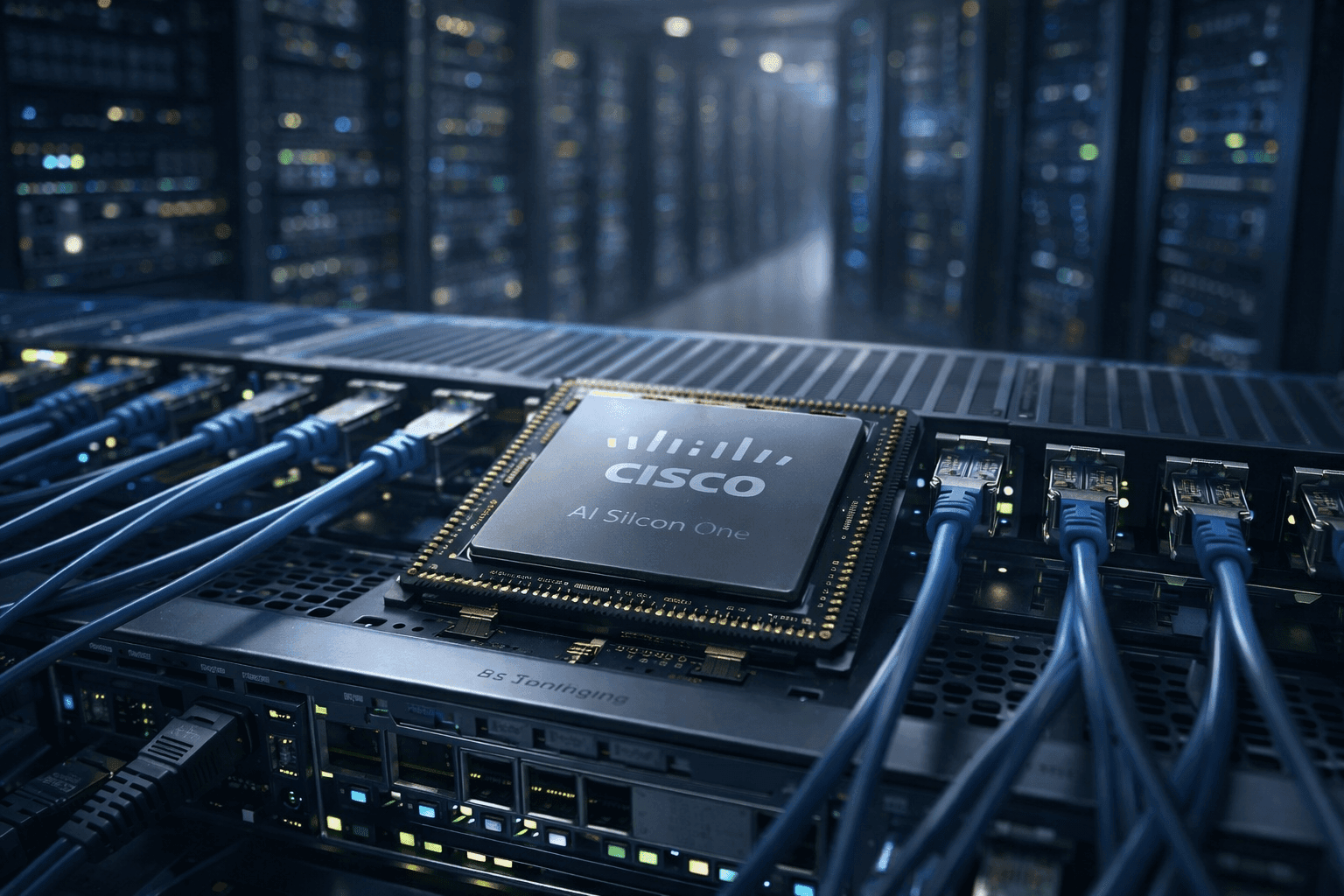

Cisco's 2026 AI networking chip marks a new front in the global AI infrastructure race


Cisco's 2026 AI networking chip has entered the competitive landscape, marking a strategic shift in how artificial intelligence workloads are handled at scale. Rather than focusing exclusively on compute silicon, the launch treats networking as a core performance driver, a shift that could change how efficiently global AI data centers operate.

The new offering, part of Cisco’s expanding Silicon One portfolio, brings networking silicon to the heart of AI acceleration infrastructure. As machine learning models increase in size and complexity, more data must be moved swiftly and intelligently between processors, memory pools, and storage layers. The result: networking performance is becoming just as important as raw computing power.

Cisco's 2026 AI networking chip explained: What sets it apart

Cisco's 2026 AI networking chip, named Silicon One G300, introduces advanced routing and traffic management features designed for AI workloads that span distributed systems. Unlike traditional network chips that prioritize basic packet forwarding, this generation incorporates dynamic congestion mitigation, smart rerouting, and throughput scaling that responds to real-time traffic demands.

These capabilities are intended to reduce latency spikes and packet loss during intense AI training and inference. In practical terms, organizations running large AI models should see more stable performance across clusters, especially when GPUs and accelerators are operating near capacity.
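To make the idea of load-aware rerouting concrete, here is a minimal toy sketch in Python. It is not Cisco's algorithm, and nothing in it reflects how the G300 works internally; the `Path` class, the uplink names, the 400 Gbps capacities, and the flow sizes are all hypothetical values chosen for illustration. It compares static hashing, where the flow id alone picks the link, with a picker that sends each new flow to the least-utilized link.

```python
from dataclasses import dataclass


@dataclass
class Path:
    """One hypothetical uplink between two switches."""
    name: str
    capacity_gbps: float
    load_gbps: float = 0.0

    @property
    def utilization(self) -> float:
        return self.load_gbps / self.capacity_gbps


def pick_static(paths, flow_id):
    """Static hashing: the flow id alone decides the path, regardless of load."""
    return paths[flow_id % len(paths)]


def pick_adaptive(paths, flow_id):
    """Load-aware choice: send the flow to the currently least-utilized path."""
    return min(paths, key=lambda p: p.utilization)


def place_flows(paths, flows, picker):
    """Assign each (flow_id, gbps) pair to a path and return the peak utilization."""
    for flow_id, gbps in flows:
        picker(paths, flow_id).load_gbps += gbps
    return max(p.utilization for p in paths)


def make_paths():
    # Four equal uplinks; the 400 Gbps figure is an illustrative assumption.
    return [Path(f"uplink{i}", capacity_gbps=400.0) for i in range(4)]


# Three large flows whose ids collide under static hashing, plus three small ones.
FLOWS = [(0, 200.0), (4, 200.0), (8, 200.0), (1, 50.0), (2, 50.0), (3, 50.0)]

static_peak = place_flows(make_paths(), FLOWS, pick_static)
adaptive_peak = place_flows(make_paths(), FLOWS, pick_adaptive)

print(f"static hashing peak utilization: {static_peak:.2f}")
print(f"load-aware peak utilization:     {adaptive_peak:.2f}")
```

In this contrived case the three large flows all hash to the same uplink and oversubscribe it, while the load-aware picker spreads them out. Real switch silicon makes such decisions per packet or per flowlet in hardware, with far more state than this sketch models.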

Cisco’s strategy reflects a deeper industry realization: AI systems are only as fast as the networks that connect them.

Why AI networking silicon matters more than ever

Historically, discussions about AI performance centered around GPUs, TPUs, and dedicated AI accelerators. However, as models grow larger and distributed computing becomes the norm, the network interconnect is emerging as the new bottleneck.

In real-world AI deployments, data frequently moves between dozens or even hundreds of interconnected processors. If network latency or congestion isn’t addressed at the silicon level, the entire pipeline suffers — even if individual processors are powerful. Networking inefficiencies can stall training progress, inflate costs, and limit scaling.
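A back-of-the-envelope model shows why the network can dominate. The sketch below assumes a ring all-reduce for gradient synchronization, in which each worker sends roughly 2(N-1)/N times the gradient size per step, and it assumes no overlap between compute and communication. The function names, the 10 GB gradient size, the 64 workers, and the link speeds are illustrative assumptions, not figures from Cisco.

```python
def ring_allreduce_seconds(grad_bytes, workers, link_gbps):
    """Bandwidth-only estimate of one ring all-reduce (ignores latency and overlap)."""
    if workers < 2:
        return 0.0
    # Each worker sends about 2 * (N - 1) / N times the gradient size in total.
    bytes_per_worker = 2 * (workers - 1) / workers * grad_bytes
    return bytes_per_worker * 8 / (link_gbps * 1e9)


def step_seconds(compute_s, grad_bytes, workers, link_gbps):
    """Total step time if communication is not overlapped with compute."""
    return compute_s + ring_allreduce_seconds(grad_bytes, workers, link_gbps)


if __name__ == "__main__":
    # Hypothetical job: 1 s of compute per step, 10 GB of gradients, 64 workers.
    for gbps in (100, 400, 800):
        total = step_seconds(1.0, 10e9, 64, gbps)
        comm = total - 1.0
        print(f"{gbps:>4} Gbps: step {total:.3f} s, "
              f"{comm / total:.0%} spent on communication")
```

Under these assumptions, a slower link leaves processors idle for most of each step, which is the stall the paragraph above describes. Production training stacks overlap communication with compute and use other collective algorithms, so real numbers will differ.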

By optimizing networking silicon with features tailored to AI traffic patterns, Cisco aims to reduce these barriers. This chip is not focused on raw computations per second, but on efficiently connecting high-performance components in complex systems.

How Cisco stacks up against Broadcom and Nvidia

The release of Cisco's 2026 AI networking chip immediately places the company in competition with established networking silicon leaders, particularly Broadcom and Nvidia.

Broadcom’s portfolio has long dominated high-capacity switching and routing within data centers. Its silicon is known for stability, throughput, and integration in large-scale environments. Nvidia, meanwhile, has been building its own networking solutions tightly integrated with its GPU ecosystem, emphasizing seamless acceleration across compute and interconnect layers.

Cisco’s strategy differs in two key ways:

- Hardware-agnostic networking silicon: Designed to work across diverse compute ecosystems rather than only within one vendor’s stack.

- AI-centric traffic intelligence: Features such as adaptive rerouting and congestion prediction are built explicitly for AI workloads.

This gives Cisco the potential to appeal to enterprises and cloud providers that want flexible network layers capable of fitting into existing architectures without forcing a wholesale shift to a single vendor’s hardware.

Potential impact on data center design and operations

The introduction of Cisco's AI-optimized networking silicon has broader implications for data center architecture:

- Reduced operational bottlenecks: Smoother data flows let organizations run more complex models without network stalls degrading end-to-end performance.

- Improved scalability: Networking that adapts in real time enables easier scaling across thousands of nodes.

- Cost efficiency: Better routing efficiency can reduce the need for oversized hardware clusters or redundant systems.

CIOs and infrastructure architects are already watching this space closely. Many believe that future high-performance clusters will be designed not around processors alone, but as balanced systems where networking and computing co-evolve.

Market reactions and what analysts are saying

Although Cisco's announcement was technical in tone, industry analysts have pointed out that the move reflects shifting priorities in the AI economy. Rather than treating networking as an afterthought, Cisco’s strategy signals recognition that data throughput and latency management are strategic assets.

Some analysts are already forecasting that integrated networking silicon could become a key differentiator when organizations choose vendors for AI deployment. While Nvidia remains strong due to its compute leadership and software ecosystem, Cisco’s approach opens doors for hybrid and heterogeneous systems—where different compute blocks work together seamlessly across a shared network layer.

Real-world use cases for enhanced networking silicon

The new networking chip is expected to impact multiple categories of AI usage:

- Large-scale model training: Faster synchronization between clusters means reduced training times.

- Real-time inference systems: Lower latency supports applications like autonomous systems, financial trading, and live analytics.

- Edge-to-cloud deployments: Intelligent network silicon can help hybrid architectures coordinate workloads between local edge units and centralized cloud resources.

These gains matter in practice: enterprise adoption of AI continues to grow, and infrastructure must keep pace.

What happens next with Cisco’s AI networking tech

Cisco is positioning the G300 as a foundational technology for future AI systems. While no pricing or customer commitments have been disclosed yet, the company has indicated that early trials are underway with select enterprise and cloud partners.

Looking forward, the development could influence:

- New hardware platforms designed around network intelligence

- Ecosystem partnerships focused on integrated compute and networking

- Open standards for AI-optimized network operations